Hadoop Mapper

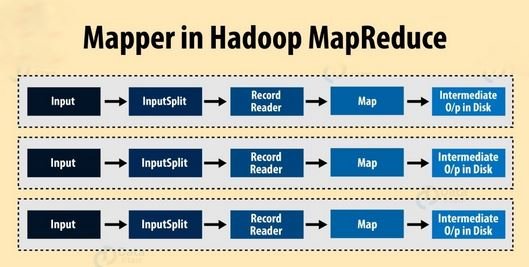

Hadoop Mapper task is the first phase of processing that processes each input record (from RecordReader) and generates an intermediate key-value pair. Hadoop Mapper store intermediate-output on the local disk. Mapper task processes each input record and it generates a new <key, value> pairs. The <key, value> pairs can be completely different from the input pair.

Before writing the output for each mapper task, partitioning of output take place on the basis of the key and then sorting is done. This partitioning specifies that all the values for each key are grouped together. MapReduce frame generates one map task for each InputSplit generated by the InputFormat for the job.

Hadoop Mapper only understands <key, value> pairs of data, so before passing data to the mapper, data should be first converted into <key, value> pairs.

How is key value pair generated in Hadoop?

Let us now discuss the key-value pair generation in Hadoop.

InputSplit

It is the logical representation of data. It describes a unit of work that contains a single map task in a MapReduce program.

RecordReader

It communicates with the InputSplit and it converts the data into key-value pairs suitable for reading by the Mapper. By default, it uses TextInputFormat for converting data into the key-value pair. RecordReader communicates with the Inputsplit until the file reading is not completed.

How does Hadoop Mapper work?

InputSplits converts the physical representation of the block into logical for the mapper. InputSpits do not always depend on the number of blocks, we can customize the number of splits for a particular file by setting mapred.max.split.size property during job execution.

RecordReader’s responsibility is to keep reading/converting data into key-value pairs until the end of the file. Byte offset (unique number) is assigned to each line present in the file by RecordReader. Further, this key-value pair is sent to the mapper. The output of the mapper program is called as intermediate data (key-value pairs which are understandable to reduce).

How many map tasks in Hadoop?

The total number of blocks of the input files handles the number of map tasks in a program. For maps, the right level of parallelism is around 10-100 maps/node, although for CPU-light map tasks it has been set up to 300 maps. Since task setup takes some time, so it’s better if the maps take at least a minute to execute.

For example, if we have a block size of 128 MB and we expect 10TB of input data, we will have 82,000 maps. Thus, the InputFormat determines the number of maps.

Hence, No. of Mapper= {(total data size)/ (input split size)}

For example, if data size is 1 TB and InputSplit size is 100 MB then,

No. of Mapper= (1000*1000)/100= 10,000