Asymptotic Notation

In this tutorial, we are going to discuss about the Asymptotic Notation. Whenever we want to perform analysis of an algorithm, we need to calculate the complexity of that algorithm. But when we calculate complexity of an algorithm it does not provide exact amount of resource required.

So instead of taking exact amount of resource we represent that complexity in a general form (Notation) which produces the basic nature of that algorithm. We use that general form (Notation) for analysis process. Asymptotic notation of an algorithm is a mathematical representation of its complexity.

Note

In asymptotic notation, when we want to represent the complexity of an algorithm, we use only the most significant terms in the complexity of that algorithm and ignore least significant terms in the complexity of that algorithm (Here complexity may be Space Complexity or Time Complexity). For example, consider the following time complexities of two algorithms.

- Algorihtm 1: 6n2 + 3n + 2

- Algorihtm 2: 9n2 + 8n + 3

Generally, when we analyze an algorithm, we consider the time complexity for larger values of input data (i.e. ‘n’ value). In above 2 time-complexities, for larger value of ‘n’ the term in algorithm 1 ‘3n + 2’ has least significance than the term ‘6n2’, and the term in algorithm 2 ‘8n + 3’ has least significance than the term ‘9n2’.

Here for larger value of ‘n’ the value of most significant terms (6n2 and 9n2) is very larger than the value of least significant terms (3n + 2 and 8n + 3). So, for larger value of ‘n’ we ignore the least significant terms to represent overall time required by an algorithm. In asymptotic notation, we use only the most significant terms to represent the time complexity of an algorithm. Majorly, we use THREE types of Asymptotic Notations and those are as follows

1. Big – Oh (O)

2. Big – Omega (Ω)

3. Big – Theta (T)

1. Big – Oh Notation (O)

Big – Oh notation is used to define the upper bound of an algorithm in terms of Time Complexity. That means Big – Oh notation always indicates the maximum time required by an algorithm for all input values. That means Big – Oh notation describes the worst case of an algorithm time complexity.

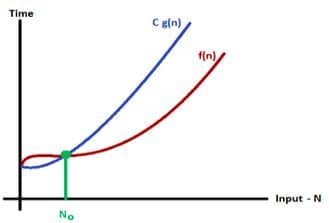

Consider function f(n) the time complexity of an algorithm and g(n) is the most significant term. If f(n) <= C g(n) for all n >= n0, C > 0 and n0 >= 1. Then we can represent f(n) as O(g(n)). For example, if f(n) = n4 + 50n2 + 6n + 5 is the given algorithm, then n4 is g(n). That means g(n) gives the maximum rate of growth for f(n) at larger values of n. Consider the following graph drawn for the values of f(n) and C g(n) for input (n) value on X-Axis and time required is on Y-Axis.

In above graph after a particular input value n0, always C g(n) is greater than f(n) which indicates the algorithm’s upper bound.

Consider the following f(n) and g(n).

f(n) = 3n + 2

g(n) = nIf we want to represent f(n) as O(g(n)) then it must satisfy f(n) <= C x g(n) for all values of C > 0 and n0>= 1

f(n) <= C g(n) ?3n + 2 <= C n Above condition is always TRUE for all values of C = 4 and n >= 2. By using Big – Oh notation we can represent the time complexity as 3n + 2 = O(n).

2. Big – Omega Notation (Ω)

Big – Omega notation is used to define the lower bound of an algorithm in terms of Time Complexity. That means Big – Omega notation always indicates the minimum time required by an algorithm for all input values. That means Big – Omega notation describes the best case of an algorithm time complexity.

Consider function f(n) the time complexity of an algorithm and g(n) is the most significant term. If f(n) >= C x g(n) for all n >= n0, C > 0 and n0 >= 1. Then we can represent f(n) as Ω(g(n)). For example, if f(n) = 100n2 + 10n + 50, g(n) is Ω(n2). Consider the following graph drawn for the values of f(n) and C g(n) for input (n) value on X-Axis and time required is on Y-Axis.

Example

Consider the following f(n) and g(n).

f(n) = 3n + 2

g(n) = nIf we want to represent f(n) as Ω(g(n)) then it must satisfy f(n) >= C g(n) for all values of C > 0 and n0>= 1

f(n) >= C g(n) ?3n + 2 <= C n Above condition is always TRUE for all values of C = 1 and n >= 1. By using Big – Omega notation we can represent the time complexity as 3n + 2 = Ω(n).

3. Big – Theta Notation (Θ)

Big – Theta notation is used to define the average bound of an algorithm in terms of Time Complexity. That means Big – Theta notation always indicates the average time required by an algorithm for all input values. That means Big – Theta notation describes the average case of an algorithm time complexity.

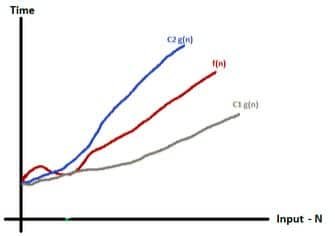

Big – Theta Notation can be defined as “Consider function f(n) the time complexity of an algorithm and g(n) is the most significant term. If C1 g(n) <= f(n) >= C2 g(n) for all n >= n0, C1, C2 > 0 and n0 >= 1. Then we can represent f(n) as Θ(g(n)).”

Consider the following graph drawn for the values of f(n) and C g(n) for input (n) value on X-Axis and time required is on Y-Axis.

In above graph after a particular input value n0, always C1 g(n) is less than f(n) and C2 g(n) is greater than f(n) which indicates the algorithm’s average bound.

Example

Consider the following f(n) and g(n).

f(n) = 3n + 2

g(n) = nIf we want to represent f(n) as T(g(n)) then it must satisfy C1 g(n) <= f(n) >= C2 g(n) for all values of C1, C2 > 0 and n0>= 1

C1 g(n) <= f(n) >=

⇒ C2 g(n)

C1 n <= 3n + 2 >= C2 nAbove condition is always TRUE for all values of C1 = 1, C2 = 4 and n >= 1. By using Big – Theta notation we can represent the time complexity as 3n + 2 = Θ(n).

Guidelines for Asymptotic Analysis

There are some general rules to help us determine the running time of an algorithm.

1. Loops: The running time of a loop is, at most, the running time of the statements inside the loop (including tests) multiplied by the number of iterations.

// executes n times

for (int i=1; i<=n;i++)

a = a +4; // constant time

Total time = a constant c * n = c * n = O(n).2. Nested loops: Analyze from the inside out. Total running time is the product of the sizes of all the loops.

// outer loop executed n times

for (int i=1; i<=n;i++)

// inner loop executed n times

for (int j=1; j<=n;j++)

a = a +4; // constant time

Total time = c * n * n= cn2 = O(n2).3. Consecutive statements: Add the time complexities of each statement.

a = a + 2; // constant time

// executes n times

for (int i=1; i<=n;i++)

m = m * 2; // constant time

// outer loop executed n times

for (int i=1; i<=n;i++) {

// inner loop executed n times

for (int j=1; j<=n;j++)

a = a +4; // constant time

}

Total time = c0 +c1 n + c2n2 = cn2 = O(n2).4. If-then-else statements: Worst-case running time: the test, plus either the then part or the else part (whichever is the larger).

//test: constant

//test: constant

if(length() == 0) {

return false; // then part: constant

} else { // else part: (constant + constant) * n

for(int i = 0; i<length();i++) {

//another if: constant + constant (no else part)

if(!list[i].equals(otherList.list[i]))

// constant

return false;

}

} 5. Logarithmic complexity: An algorithm is O(logn) if it takes a constant time to cut the problem size by a fraction (usually by 1/2). As an example, let us consider the following program.

for(int i=0; i<=n;)

i = i *2;If we observe carefully, the value of i is doubling every time. Initially i = 1, in next step I = 2, and in subsequent steps i = 4,8 and so on. Let us assume that the loop is executing some k times. At kth step 2k = n, and at (k + 1) th step we come out of the loop. Taking logarithm on both sides, gives

log(2k) = logn

klog2=logn

k=logn // if we assume base 2

Total time = O(logn).Similarly, for the case below, the worst case rate of growth is O(logn). The same discussion holds good for the decreasing sequence as well.

for(int i=0; i<=n;)

i = i/2;Recursive Algorithm

In computer science, all algorithms are implemented with programming language functions. We can view a function as something that is invoked (called) by another function. It executes its code and then returns control to the calling function. Here, a function can call themselves (by itself) or it may call another function which again call same function inside it.

The function which calls by itself is called as Direct Recursive function (or Recursive function). A recursive algorithm can also be defined as follows.

The function which calls a function and that function calls its called function is called Indirect Recursive function (or Recursive function).

Running Time Analysis

Running Time Analysis is the process of determining how processing time increases as the size of the problem (input size) increases. Input size is the number of elements in the input, and depending on the problem type, the input may be of different types. The following are the common types of inputs.

- Size of an array

- Polynomial degree

- Number of elements in a matrix

- Number of bits in the binary representation of the input

- Vertices and edges in a graph.

The rate at which the running time increases as a function of input is called rate of growth. Let us assume that you go to a shop to buy a car and a bicycle. If your friend sees you there and asks what you are buying, then in general you say buying a car. This is because the cost of the car is high compared to the cost of the bicycle (approximating the cost of the bicycle to the cost of the car).

Total cost = cost of the car + cost of the bicycle

Total cost ≈ cost of the car (approximation...) For the above-mentioned example, we can represent the cost of the car and the cost of the bicycle in terms of function, and for a given function ignore the low order terms that are relatively insignificant (for large value of input size, n). As an example, in the case below, n4, 2n2, 100n and 500 are the individual costs of some function and approximate to n4 since n4 is the highest rate of growth.

n4+2n2+100n+500 ≈ n4That’s all about the Asymptotic Notation. If you have any queries or feedback, please write us email at contact@waytoeasylearn.com. Enjoy learning, Enjoy Data Structures.!!